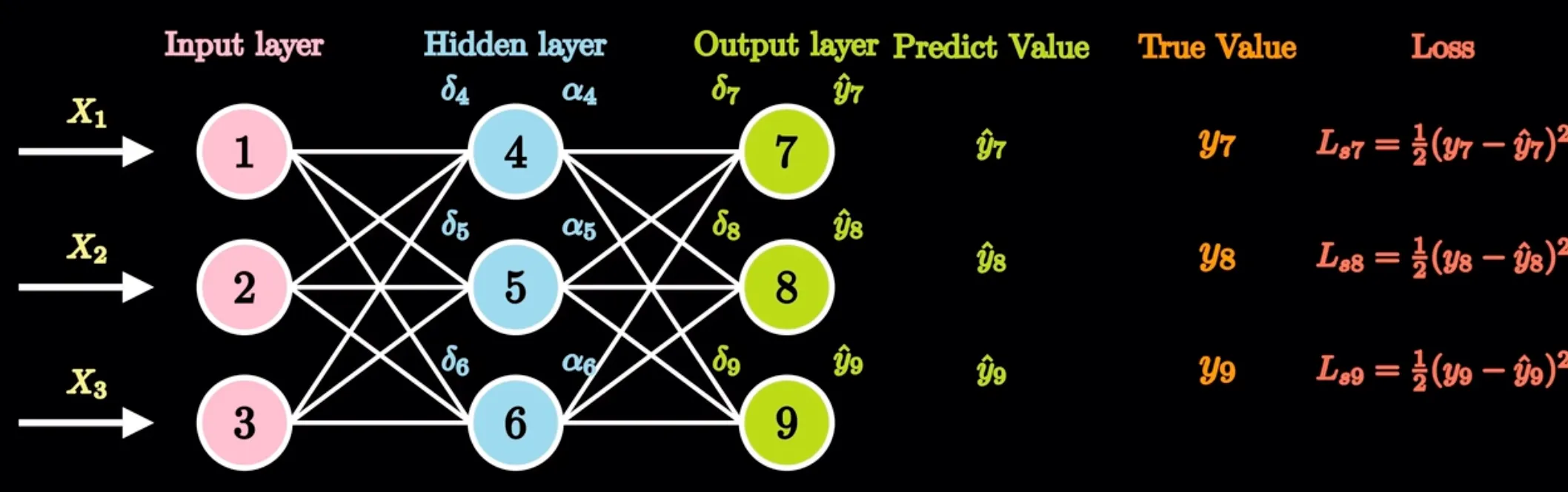

Forward and Backward Propagation in a Neural Network

Notation

Input layer

- Input:

Hidden layer

- Input (weighted sum):

- Activation:

Output layer

- Input (weighted sum):

- Prediction:

Other

- Ground truth:

- Learning rate:

- Activation function:

- Loss function:

- Weights: (e.g., the weight between neurons 1 and 4)

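Forward propagation

Known quantities

Input data: the three input-layer values, which are the initial information the network receives.

Weights: (e.g., the weight between neurons 1 and 4), which determine how strongly a signal is passed on.

Activation function:

Loss function:

Ground truth: the three target values. They are used afterwards to evaluate the predictions but take no part in the forward computation itself.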

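Quantities to compute

- Hidden-layer activations: the outputs of the three hidden neurons, each obtained by taking a weighted sum of the inputs and passing it through the activation function.

- Output-layer predictions: the network's three final predictions for the input data, and the main target of forward propagation.

- Loss (indirectly required): the loss is not part of the forward pass itself, but once the predictions are available they are combined with the ground-truth values through the loss function (for example, mean squared error) to give the loss, which measures model performance and drives the subsequent backpropagation step.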
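The forward pass described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: a 3-3-3 network, a sigmoid activation in both layers, no bias terms, and a loss of half the summed squared error. The names `sigmoid`, `forward`, `mse_loss`, `w_hidden`, and `w_out` are hypothetical and do not come from the notes.

```python
import math

def sigmoid(z):
    # Assumed activation function; the notes do not say which one is used.
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, w_hidden, w_out):
    """Forward pass through one hidden layer (no biases, by assumption).

    w_hidden[j][i] is the weight from input i to hidden unit j.
    w_out[k][j] is the weight from hidden unit j to output unit k.
    """
    # Hidden layer: weighted sum of the inputs, then the activation.
    z_hidden = [sum(w * xi for w, xi in zip(row, x)) for row in w_hidden]
    a_hidden = [sigmoid(z) for z in z_hidden]
    # Output layer: weighted sum of the hidden activations, then the activation.
    z_out = [sum(w * a for w, a in zip(row, a_hidden)) for row in w_out]
    y_hat = [sigmoid(z) for z in z_out]
    return a_hidden, y_hat

def mse_loss(y_hat, y):
    # Assumed form of the loss: half the sum of squared errors.
    return 0.5 * sum((p - t) ** 2 for p, t in zip(y_hat, y))

# Illustrative numbers only.
x = [0.1, 0.2, 0.3]
w_hidden = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6], [0.7, 0.8, 0.9]]
w_out = [[0.2, 0.1, 0.0], [0.3, 0.2, 0.1], [0.4, 0.3, 0.2]]
a_hidden, y_hat = forward(x, w_hidden, w_out)
loss = mse_loss(y_hat, [1.0, 0.0, 1.0])
```

The ground-truth values enter only in `mse_loss`, which matches the point that they play no role in the forward computation itself.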

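Backpropagation

For the backpropagation pass of the network shown in the figure:

Known quantities

Forward-propagation results: the hidden-layer activations and the output-layer predictions.

Ground truth: the three target values.

Learning rate:

Loss function:

Weights: (e.g., the weight between neurons 1 and 4), which determine how strongly a signal is passed on.

Activation function: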

Quantities to compute

- Updated weights: each weight is updated using its computed gradient and the learning rate.
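Updating a weight from its gradient and the learning rate is the standard gradient-descent step. Written out, with $w$ for a weight, $\eta$ for the learning rate, and $L$ for the loss (assumed symbol names, used here only for illustration):

```latex
w \leftarrow w - \eta \, \frac{\partial L}{\partial w}
```

With illustrative numbers $w = 0.5$, $\partial L / \partial w = 0.2$, and $\eta = 0.1$, the updated weight is $0.5 - 0.1 \times 0.2 = 0.48$.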

Updating the weights between the hidden layer and the output layer (neuron 4 as the example)
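A minimal sketch of this step, assuming sigmoid output units and a loss of half the summed squared error (neither is confirmed by the notes). Under those assumptions the chain rule gives an error signal `(y_hat - y) * y_hat * (1 - y_hat)` for each output unit, and the gradient of a weight is that signal times the hidden activation feeding it. The function names are hypothetical, and treating hidden index 0 as neuron 4 is an assumed numbering.

```python
def output_layer_gradients(a_hidden, y_hat, y):
    """Gradients of the loss with respect to the hidden-to-output weights.

    Assumes sigmoid outputs and L = 0.5 * sum((y_hat - y) ** 2).
    Returns (deltas, grads), where grads[k][j] belongs to the weight
    from hidden unit j to output unit k.
    """
    # dL/dz_k = (y_hat_k - y_k) * sigmoid'(z_k), with sigmoid' = y_hat * (1 - y_hat)
    deltas = [(p - t) * p * (1.0 - p) for p, t in zip(y_hat, y)]
    # dL/dw_kj = delta_k * a_j
    grads = [[d * a for a in a_hidden] for d in deltas]
    return deltas, grads

def apply_update(weights, grads, lr):
    # Gradient-descent step applied to every weight: w <- w - lr * dL/dw
    return [[w - lr * g for w, g in zip(w_row, g_row)]
            for w_row, g_row in zip(weights, grads)]

# Illustrative numbers only; index 0 of a_hidden stands for neuron 4 (assumed).
a_hidden = [0.5, 0.2, 0.1]
deltas, grads = output_layer_gradients(a_hidden, [0.5, 0.5, 0.5], [1.0, 0.0, 0.5])
w_out = apply_update([[0.2, 0.1, 0.0], [0.3, 0.2, 0.1], [0.4, 0.3, 0.2]], grads, lr=0.1)
```

The weights leaving neuron 4 are the entries `grads[k][0]`, one per output unit, so each of them scales with the same activation `a_hidden[0]`.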

Updating the weights between the input layer and the hidden layer (neuron 1 as the example)
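For the input-to-hidden weights, the error has to be propagated back through the output weights first. The sketch below assumes sigmoid hidden units and that the output-layer error signals (`deltas_out`) have already been computed. The function name is hypothetical, and treating input index 0 as neuron 1 and hidden index 0 as neuron 4 is an assumed numbering.

```python
def hidden_layer_gradients(x, a_hidden, deltas_out, w_out):
    """Gradients of the loss with respect to the input-to-hidden weights.

    w_out[k][j] is the weight from hidden unit j to output unit k.
    Returns (deltas_hidden, grads), where grads[j][i] belongs to the
    weight from input i to hidden unit j. Assumes sigmoid hidden units.
    """
    deltas_hidden = []
    for j, a in enumerate(a_hidden):
        # Sum the output error signals that flow back through hidden unit j.
        back = sum(deltas_out[k] * w_out[k][j] for k in range(len(deltas_out)))
        # Multiply by sigmoid'(z_j) = a_j * (1 - a_j).
        deltas_hidden.append(back * a * (1.0 - a))
    # dL/dw_ji = delta_j * x_i
    grads = [[d * xi for xi in x] for d in deltas_hidden]
    return deltas_hidden, grads

# Illustrative numbers only.
x = [1.0, 2.0, 3.0]
a_hidden = [0.5, 0.5, 0.5]
deltas_out = [0.1, 0.2, 0.3]
w_out = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
deltas_hidden, grads = hidden_layer_gradients(x, a_hidden, deltas_out, w_out)
```

Under the assumed numbering, `grads[0][0]` is the gradient for the weight between neurons 1 and 4, and it would be applied with the same gradient-descent step as any other weight.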